
Béguin A. (4), Rodzinka T. (1), Dionis E. (2), Calmels L. (1), Beldjoudi S. (1), Minjeong K. (3), Curti J. (3), Guéry-Odelin D. (1), Sugny D. (2), Allard B. (1), Gauguet A. (1), and Kasevich M. (3)

(1) Laboratoire Collisions Agrégats et Réactivité, France

(2) ICB Institut Carnot de Bourgogne, France

(3) Department of Physics, Stanford University, USA

(4) LTE, Observatoire de Paris, Université PSL, Sorbonne Université, Université de Lille, LNE, CNRS, France

ashley.beguin@obspm.fr

Efficient control of atomic coherence in cold atom systems has established atom interferometry as a powerful tool for quantum sensing and precision measurements. One of the key challenges today is scaling these interferometers to much larger dimensions. In particular, ongoing international efforts targeting gravitational wave detection and dark matter exploration aim to reach momentum separations of several thousand photon recoils between the interferometer arms [1]. Reaching such regimes calls for new experimental techniques suited to the next generation of atom interferometers.

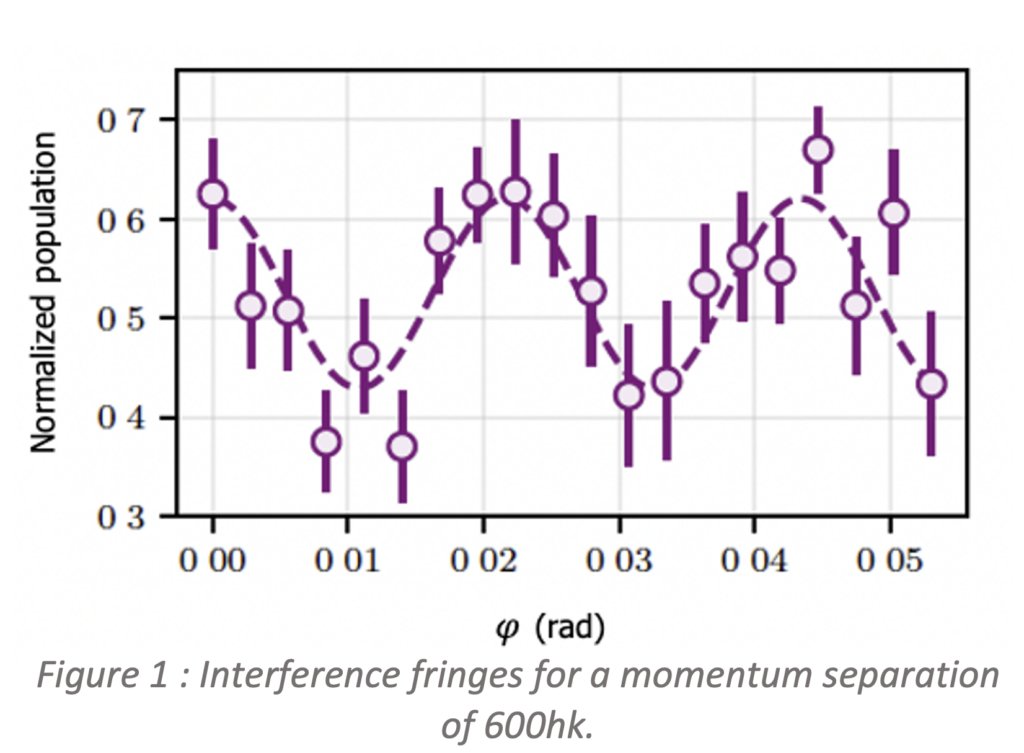

We present a novel beam-splitting approach based on the stroboscopic stabilization of quantum states in an accelerated optical lattice. This technique relies on the Floquet formalism and optimal control theory to allow coherent acceleration of a Bose–Einstein condensate in an optical lattice. This method has enabled the experimental demonstration of an atom interferometer achieving a record total momentum transfer of 600 photon recoils (Fig. 1) [2]. The performance of this experiment is currently limited only by identified technical constraints, in particular the finite size of the experimental apparatus and spontaneous emission during the interaction between the optical lattice and the atoms, suggesting that the fundamental limits of these acceleration sequences [3] should be within reach. This method could realize its full potential in large-scale atom interferometers, such as the 10-meter-tall rubidium fountain at Stanford, enabling access to new regimes and advancing efforts toward macroscopic wave-packet separation between the interferometer arms, with promising applications in precision metrology.

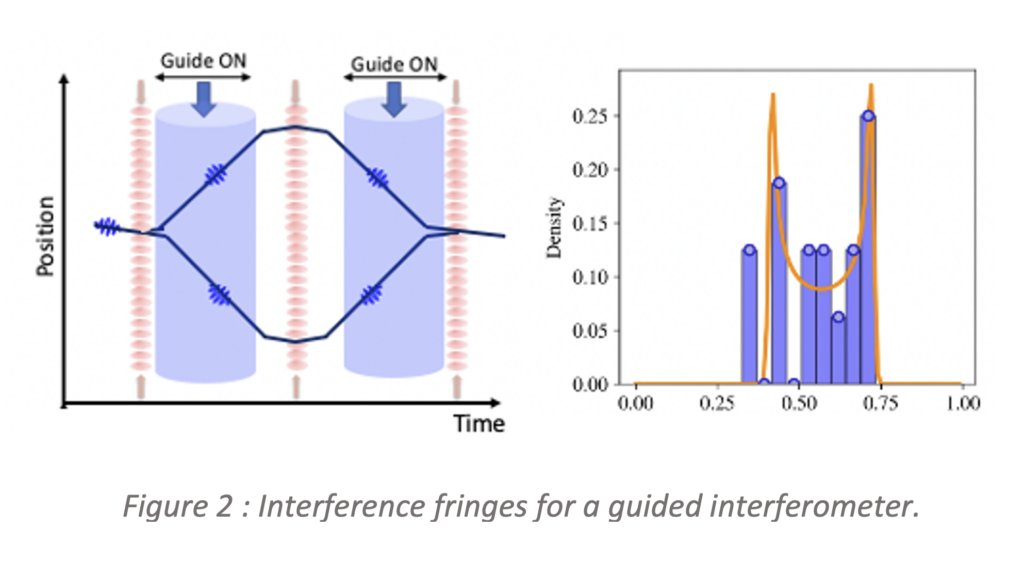

Such large-scale interferometers are, however, increasingly sensitive to systematic phase shifts, which can result from the transverse expansion of the atomic cloud. Controlling this transverse motion is therefore crucial to maintain the interferometer’s performance. Guided atom interferometers offer a promising approach to mitigate these systematics. In this configuration, atoms are confined within a vertical optical beam throughout the interferometer sequence, limiting their transverse expansion even during long interrogation times. Consequently, the required optical lattice beam size and laser power can be reduced. Moreover, this setup could help to suppress some systematic effects, including wavefront distortions, inhomogeneities in the Bragg laser beams, or Coriolis-induced phase shifts arising from the transverse velocity of the atomic cloud.

These developments pave the way toward a new generation of large-scale atom interferometers with enhanced sensitivity, enabling novel tests of fundamental physics.

[1] A. Abdalla et al., arXiv:2412.14960 (2024).

[2] T. Rodzinka et al., Nat. Commun. 15, 10281 (2024).

[3] A. Alibabaei et al., arXiv:2602.00365 (2026).